This How-To will teach you how to upload data to a Wendelin instance.

Step 1: Create the required Portal Ingestion Policy, which provides the URL to which you will later ingest data.

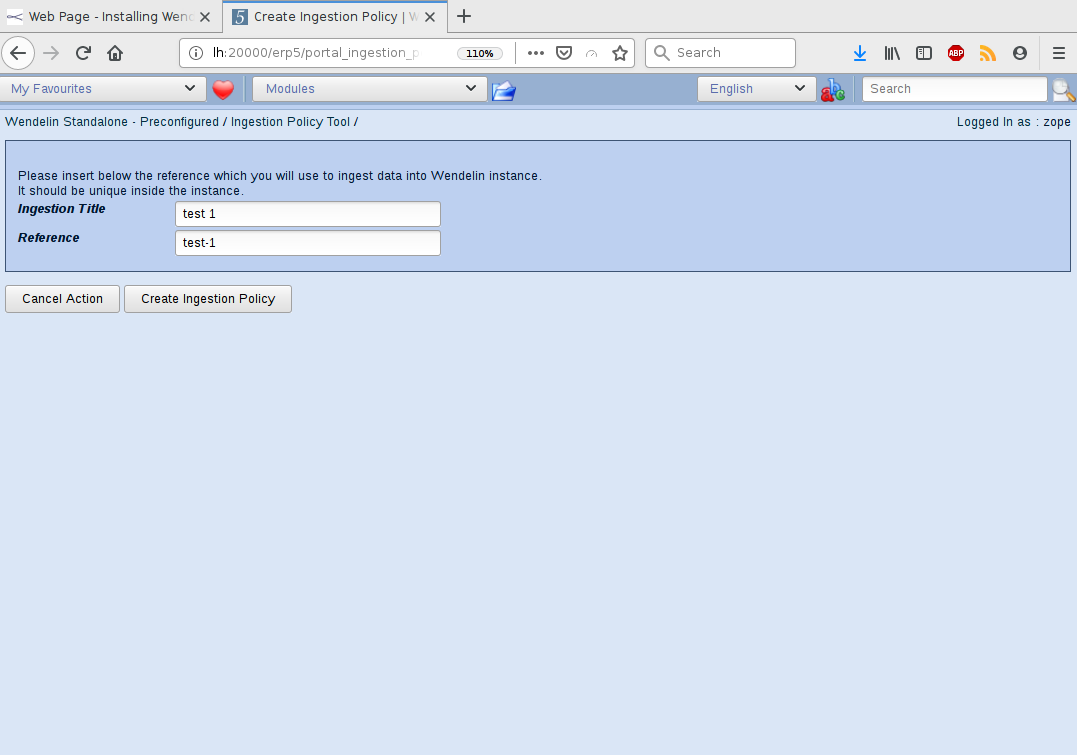

To do so, go to your Wendelin instance and select "Manage Ingestion Policies" from the top-left drop-down. On the next page, click the green running man icon. As shown below, enter a reference and a title for your Ingestion Policy.

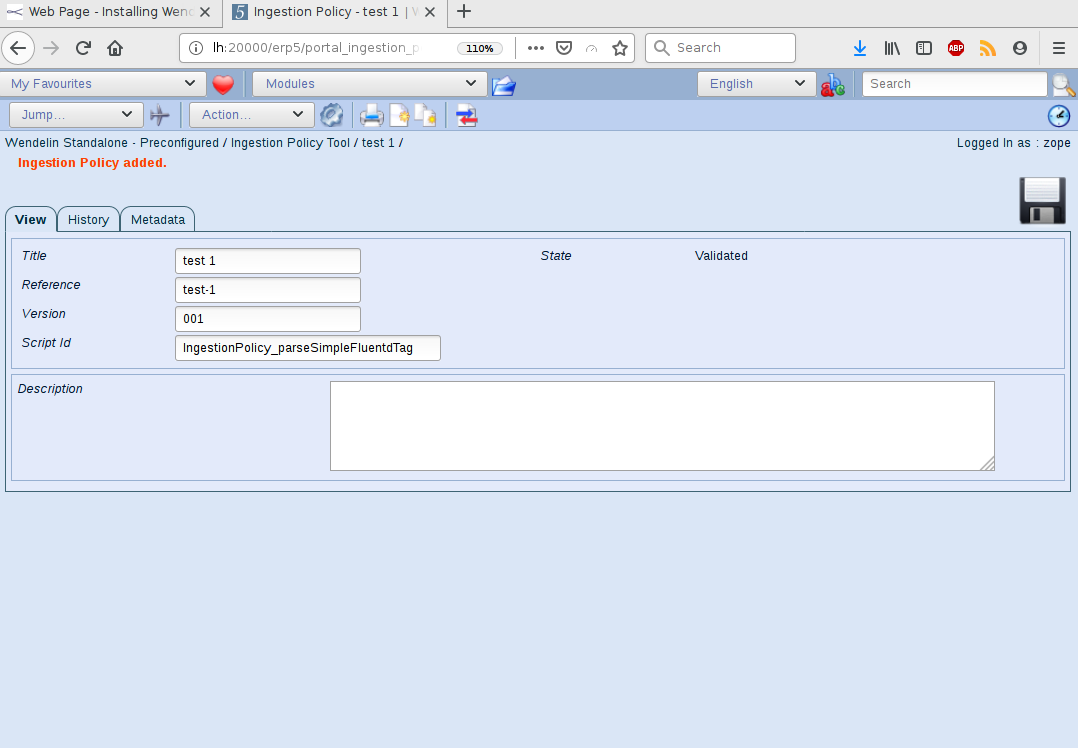

After pressing the button you will be redirected to the newly created Ingestion Policy. This is everything that needs to be done on the server side. Go to Step 2.

Step 2: Ingest data in Wendelin

We have developed a Python script which you may use.

ivan@l540:~/tmp$ git clone https://lab.nexedi.com/nexedi/wendelin

ivan@l540:~/tmp$ cd wendelin/utils/

# adjust the script with the proper username / password, the URL of your instance and the Ingestion Policy reference

ivan@l540:~/tmp/wendelin/utils$ python sample_wendelin_upload.py

The script will print a success code (204) or a failure. If it fails, check that you used the proper settings in the script.
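To make the success check concrete, here is a minimal sketch of what such an upload could look like, using only the Python standard library. It is not the content of `sample_wendelin_upload.py`. The URL layout (`portal_ingestion_policies/<reference>/ingest`), the use of HTTP Basic authentication, the raw-bytes payload and the function names are all assumptions made for illustration; the real script and your instance settings are authoritative.

```python
import base64
import urllib.error
import urllib.request


def build_ingest_request(instance_url, policy_reference, username, password, payload):
    """Build (but do not send) an authenticated POST request carrying payload."""
    # ASSUMPTION: this URL layout is illustrative only; use the URL of the
    # Ingestion Policy you created in Step 1.
    url = "%s/portal_ingestion_policies/%s/ingest" % (
        instance_url.rstrip("/"),
        policy_reference,
    )
    # ASSUMPTION: the instance accepts HTTP Basic authentication.
    credentials = ("%s:%s" % (username, password)).encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    request = urllib.request.Request(url, data=payload, method="POST")
    request.add_header("Authorization", "Basic " + token)
    request.add_header("Content-Type", "application/octet-stream")
    return request


def send(request):
    """Send the request and return the HTTP status code."""
    try:
        with urllib.request.urlopen(request) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # 4xx / 5xx responses are raised as exceptions; report their code.
        return error.code


def describe(status):
    """Map the status code to the success / failure wording used above."""
    return "success" if status == 204 else "failure (HTTP %d)" % status
```

With placeholder values, a call would look like `describe(send(build_ingest_request("https://wendelin.example.com/erp5", "test-1", "user", "secret", b"hello")))`. Keeping the request construction separate from sending makes it easy to inspect the URL and headers when the upload fails.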

Step 3: Access the uploaded data in the Wendelin instance.

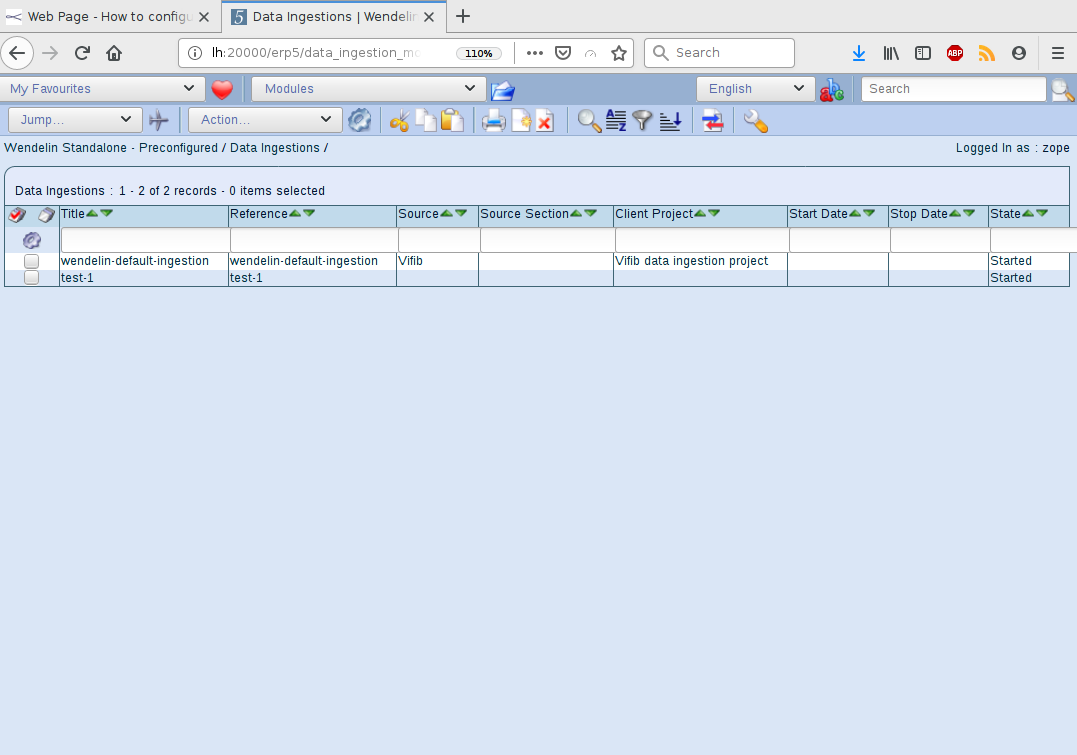

The ingestion into the system is tracked by an instance of the Data Ingestion portal type. In Step 1 we used the reference "test-1", so we need to go to the "Data Ingestion Module", which normally has the content shown in the picture below. Clicking on "test-1" leads to the respective Data Ingestion.

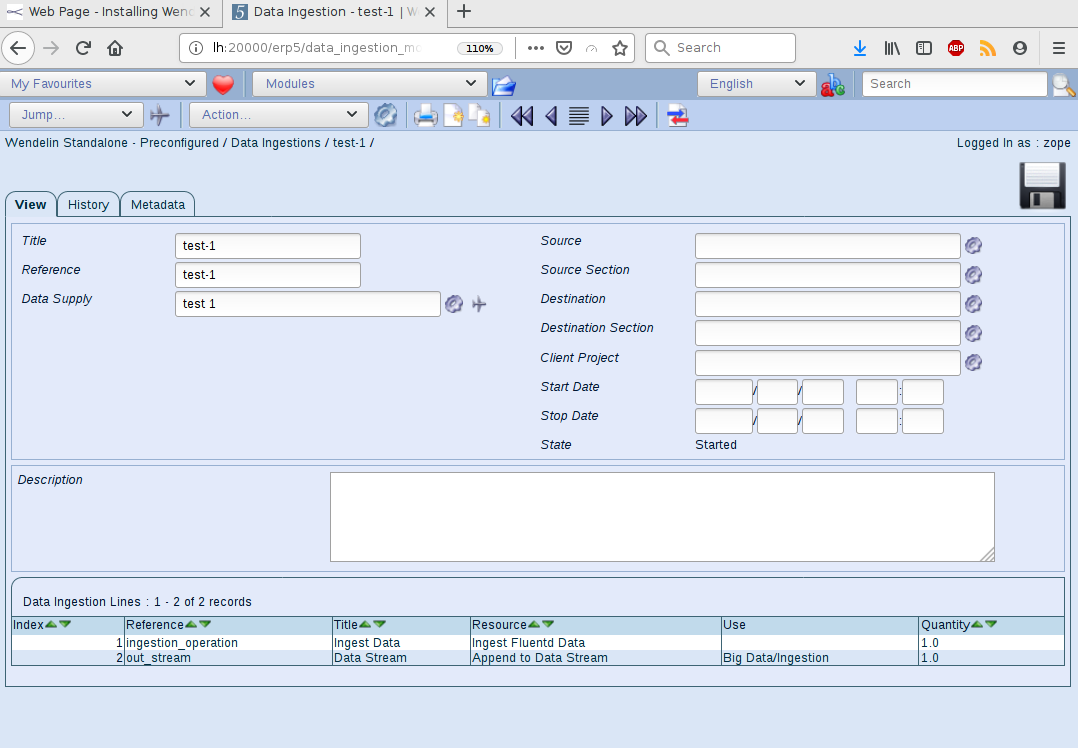

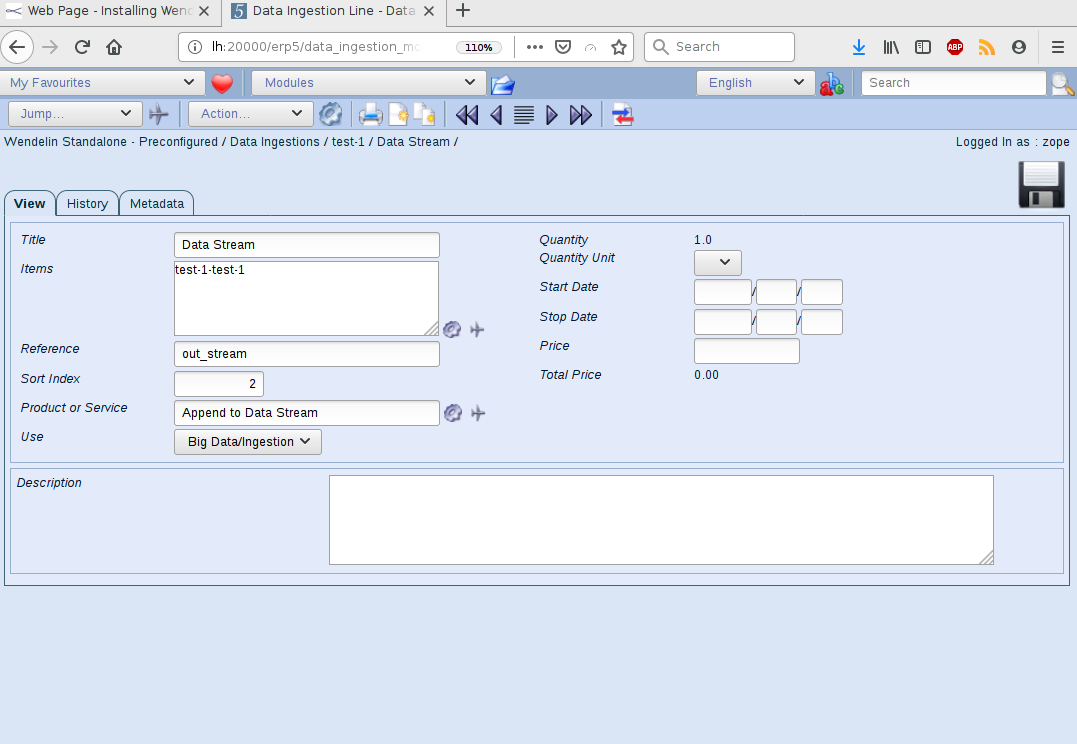

The way data is ingested is defined by Data Ingestion Lines. This can be quite a complex process, but for now we are only interested in "out_stream", so click on it.

In the Data Ingestion Line view we can see the "Items" text area field containing the "test-1 test-1" Item. This is where the data is actually stored, in a so-called "Data Stream". Clicking on the "airplane" icon leads to the Data Stream which contains the freshly ingested data.

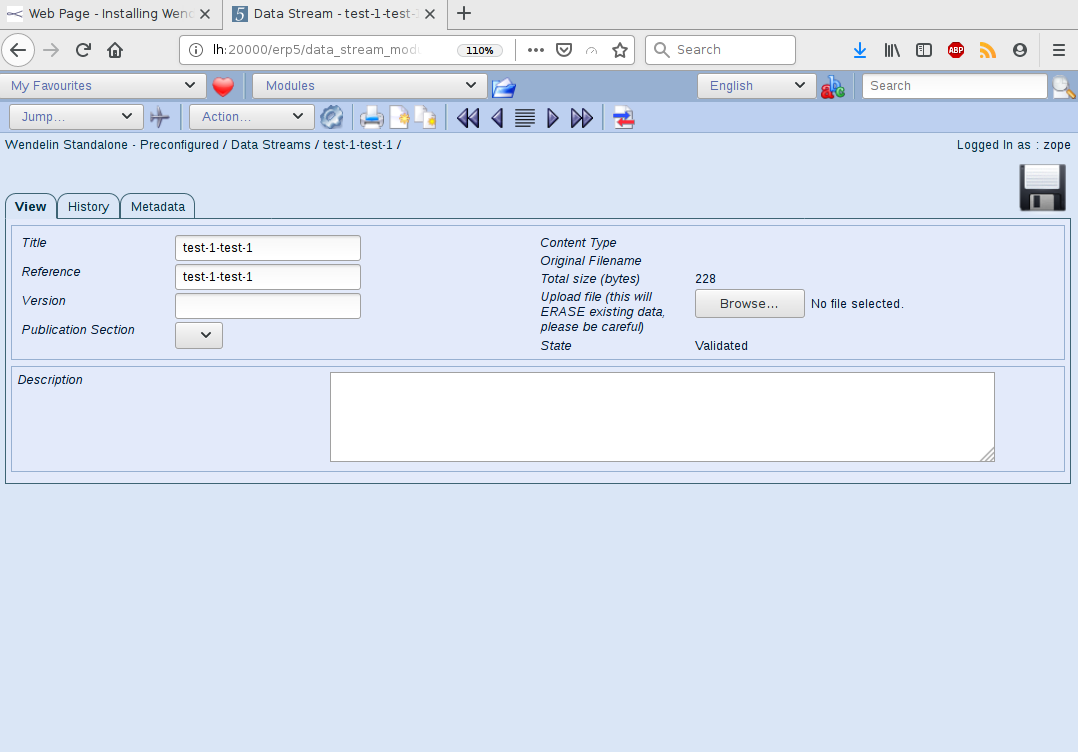

The next image shows the Data Stream. We have already uploaded some data (see "Total size (bytes)").

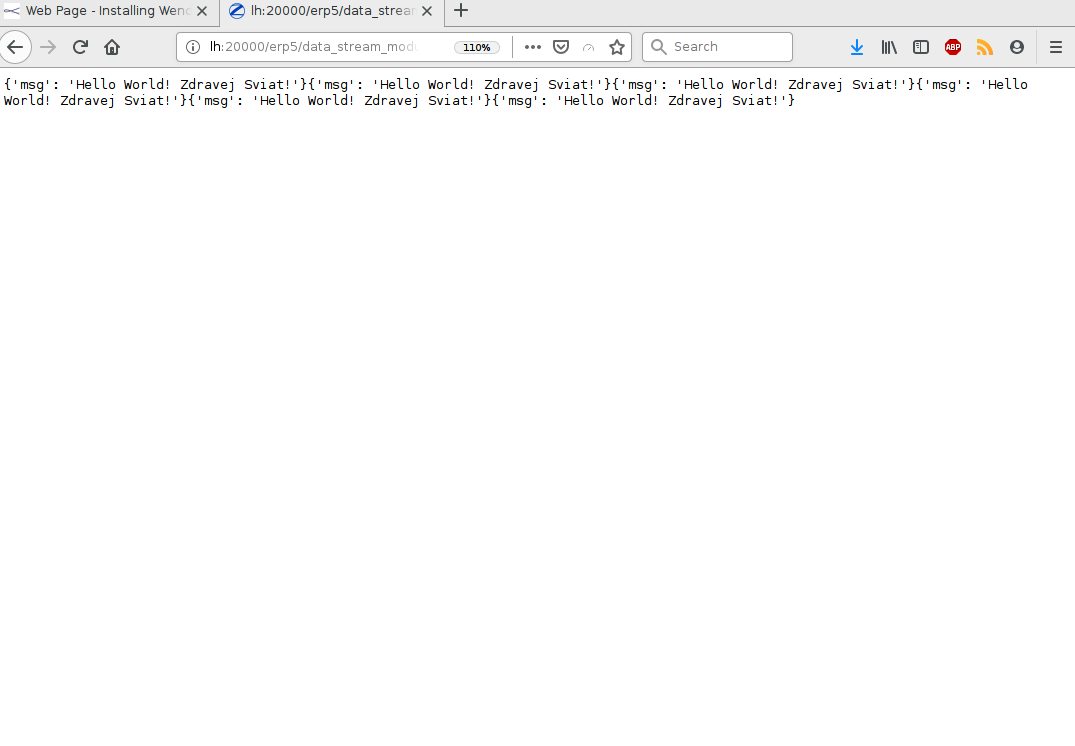

To view the data in the browser, append the method "/getData" to the Data Stream's URL. The output shows that we have uploaded data from our sample script several times.
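The same retrieval can be sketched outside the browser. The example below appends `/getData` to a Data Stream URL and fetches the result with the Python standard library. The example URL, the use of HTTP Basic authentication and the function names are assumptions for illustration; copy the actual Data Stream URL from your browser.

```python
import base64
import urllib.request


def get_data_url(data_stream_url):
    """Append the getData method to a Data Stream URL."""
    return data_stream_url.rstrip("/") + "/getData"


def fetch_stream_data(data_stream_url, username, password):
    """Return the raw bytes stored in the Data Stream."""
    request = urllib.request.Request(get_data_url(data_stream_url))
    # ASSUMPTION: the instance accepts HTTP Basic authentication.
    credentials = ("%s:%s" % (username, password)).encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    request.add_header("Authorization", "Basic " + token)
    with urllib.request.urlopen(request) as response:
        return response.read()
```

For example, `fetch_stream_data("https://wendelin.example.com/erp5/data_stream_module/1", "user", "secret")` would return the uploaded bytes, where the host and the Data Stream path are placeholders.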

Ingest using fluentd

ivan@z600:~$ gem install --user-install fluentd

ivan@z600:~$ gem install --user-install fluent-plugin-wendelin

ivan@z600:~$ git clone https://lab.nexedi.com/nexedi/wendelin.git

ivan@z600:~$ ~/.gem/ruby/2.3.0/bin/fluentd -vv -c wendelin/utils/fluentd-wendelin.conf

# by appending data to test.log we can see that within a few seconds the data is appended to the respective Data Stream on the server side

ivan@z600:~$ echo "111111111111111111111" >> test.log

# ... or one can create and run a script that records data every 2 seconds

ivan@z600:~$ vi repeat.sh

#!/bin/bash

while true; do

sleep 2

date >> test.log

done
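If you prefer Python over a shell loop, the same recorder can be sketched as follows. It assumes that fluentd is watching `test.log` as configured above. The function name and the `iterations` parameter are introduced here only for illustration, and the timestamp is written in ISO 8601 format rather than the output format of `date`.

```python
import time
from datetime import datetime


def append_timestamps(path, interval_seconds=2.0, iterations=None):
    """Append the current timestamp to path every interval_seconds.

    Runs forever when iterations is None, like the shell loop above.
    """
    count = 0
    while iterations is None or count < iterations:
        time.sleep(interval_seconds)
        # Reopen the file on every pass so each line is flushed to disk
        # and can be picked up by whatever is watching the log.
        with open(path, "a") as log_file:
            log_file.write(datetime.now().isoformat() + "\n")
        count += 1
```

Calling `append_timestamps("test.log")` reproduces the behaviour of `repeat.sh`; passing a finite `iterations` value is convenient for a quick trial run.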

This is a very simple tutorial. The next one will show how to access the data and do some training on it.